The first portion of results contains the best-fit values of the slope and Y-intercept terms. These parameter estimates build the regression line of best fit, and you can see how they fit into the equation at the bottom of the results section. The linear regression interpretation of the slope coefficient, m, is, "The estimated change in Y for a 1-unit increase of X." The interpretation of the intercept parameter, b, is, "The estimated value of Y when X equals 0." X is simply the variable used to make the prediction of Y. Our guide can help you learn more about interpreting regression slopes, intercepts, and confidence intervals.

Use the goodness of fit section to learn how close the relationship is. R-square quantifies the percentage of variation in Y that can be explained by X.

The next question may seem odd at first glance: Is the slope significantly non-zero? This goes back to the slope parameter specifically. If it is significantly different from zero, then there is reason to believe that X can be used to predict Y. If not, the model's line is not any better than no line at all, so the model is not particularly useful! P-values help with interpretation here: if the P-value is smaller than some threshold (often .05), we have evidence to suggest a statistically significant relationship.

Finally, the equation is given at the end of the results section. Plug in any value of X (within the range of the dataset anyway) to calculate the corresponding prediction for its Y value.
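To make these result sections concrete, here is a minimal sketch in plain Python that computes the same quantities for a tiny data set: the slope and intercept, R-square, a t statistic for the slope, and a prediction. The data values, the variable names, and the `predict` helper are all made up for illustration, and the hard-coded critical value only applies to this example's 3 degrees of freedom; a real calculator would report an exact P-value instead.

```python
import math

# Hypothetical data, made up for illustration only.
xs = [1, 2, 3, 4, 5]
ys = [2, 4, 5, 4, 5]

n = len(xs)
mean_x, mean_y = sum(xs) / n, sum(ys) / n
ss_xx = sum((x - mean_x) ** 2 for x in xs)
ss_xy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))

m = ss_xy / ss_xx        # slope: estimated change in Y per 1-unit increase of X
b = mean_y - m * mean_x  # intercept: estimated value of Y when X equals 0

# Goodness of fit: R-square = 1 - (unexplained variation / total variation in Y).
ss_res = sum((y - (m * x + b)) ** 2 for x, y in zip(xs, ys))
ss_tot = sum((y - mean_y) ** 2 for y in ys)
r_squared = 1 - ss_res / ss_tot

# Is the slope significantly non-zero? t = slope / standard error, with n - 2 df.
se_slope = math.sqrt(ss_res / (n - 2) / ss_xx)
t_stat = m / se_slope

# Assumption: two-sided 5% critical value of t for 3 degrees of freedom,
# taken from a standard t-table. It is only valid for n = 5 points.
T_CRIT_DF3 = 3.182
slope_is_significant = abs(t_stat) > T_CRIT_DF3


def predict(x):
    """Predicted Y for a given X, refusing to extrapolate beyond the data."""
    if not min(xs) <= x <= max(xs):
        raise ValueError("x is outside the range of the dataset")
    return m * x + b


print(f"Y = {m:.2f}X + {b:.2f}")
print(f"R-square = {r_squared:.2f}, t = {t_stat:.2f}")
print(f"slope significant at .05: {slope_is_significant}")
print(f"prediction at X = 3.5: {predict(3.5):.2f}")
```

With only five points, the slope in this example does not clear the .05 threshold even though R-square is 0.6, which is a useful reminder that goodness of fit and statistical significance answer different questions.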

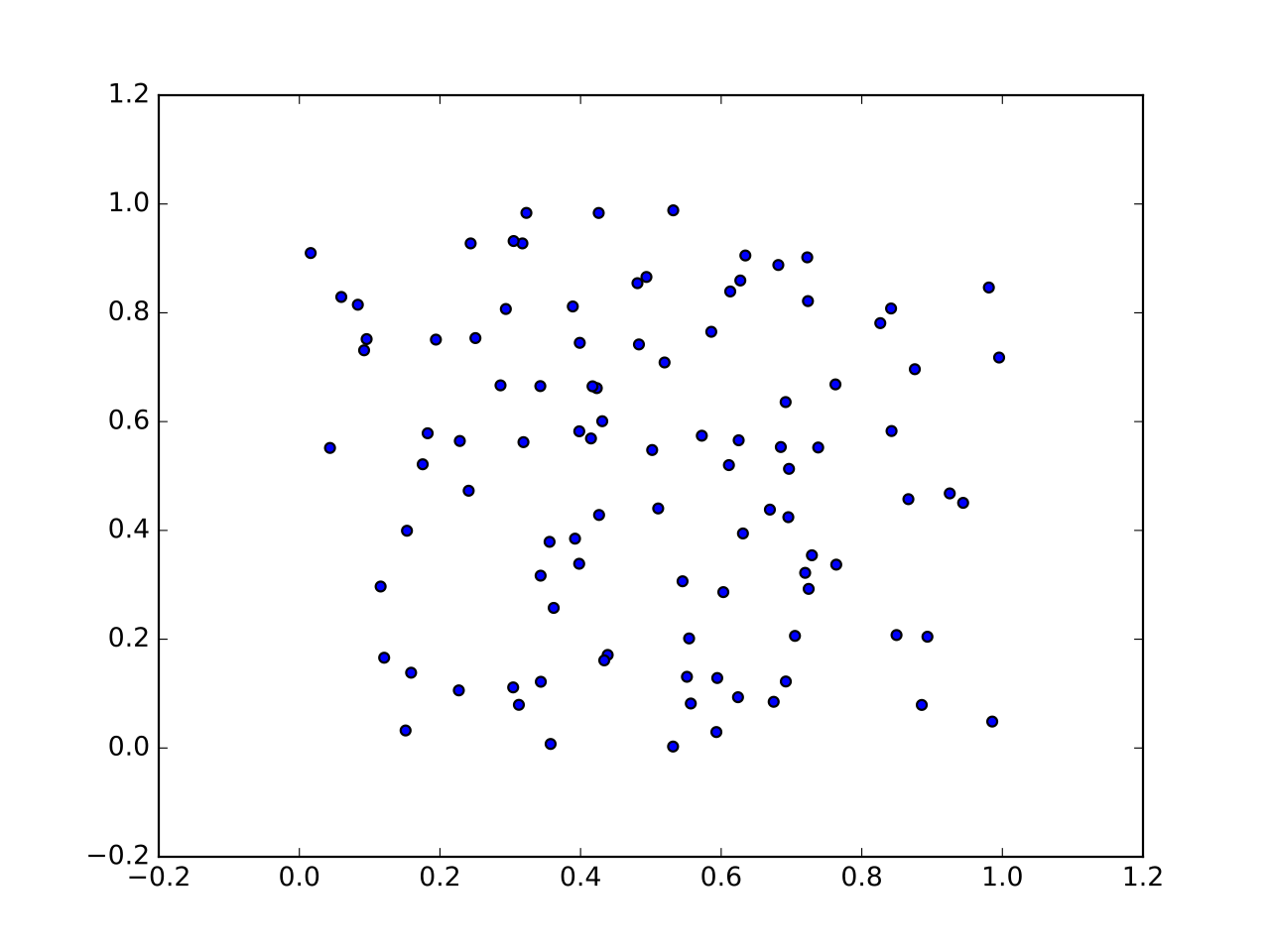

While it is possible to calculate linear regression by hand, it involves a lot of sums and squares, not to mention sums of squares! So if you're asking how to find linear regression coefficients or how to find the least squares regression line, the best answer is to use software that does it for you. Linear regression calculators determine the line of best fit by minimizing the sum of squared error terms (the squared differences between the data points and the line). The calculator above will graph and output a simple linear regression model for you, along with testing the relationship and the model equation. Keep in mind that Y is your dependent variable: the one you're ultimately interested in predicting.
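For readers curious about what those "sums and squares" look like, the following is a minimal sketch of the least-squares calculation in plain Python. The function name `fit_line` and the sample data are hypothetical, chosen only for illustration; it uses the standard closed-form solution rather than any particular calculator's implementation.

```python
def fit_line(xs, ys):
    """Return (slope, intercept) of the least-squares line through the points."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need at least two (x, y) pairs of equal length")

    mean_x = sum(xs) / n
    mean_y = sum(ys) / n

    # Sum of squares of X about its mean, and sum of cross-products of X and Y.
    ss_xx = sum((x - mean_x) ** 2 for x in xs)
    ss_xy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    if ss_xx == 0:
        raise ValueError("all X values are identical; the slope is undefined")

    # These two values minimize the sum of squared vertical distances
    # between the data points and the line.
    slope = ss_xy / ss_xx
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Hypothetical data, made up for illustration only.
xs = [1, 2, 3, 4, 5]
ys = [2, 4, 5, 4, 5]
slope, intercept = fit_line(xs, ys)
print(f"Y = {slope:.2f}X + {intercept:.2f}")
```

Centering the data on its means before summing keeps the arithmetic short and avoids the larger intermediate values that the raw-sums version of the formula produces.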

In statistics, simple linear regression (SLR) is a linear regression model with a single explanatory variable. That is, it concerns two-dimensional sample points with one independent variable and one dependent variable (conventionally, the x and y coordinates in a Cartesian coordinate system) and finds a linear function (a non-vertical straight line) that, as accurately as possible, predicts the dependent variable values as a function of the independent variable. The adjective simple refers to the fact that the outcome variable is related to a single predictor. Okun's law in macroeconomics is an example of simple linear regression: the dependent variable (GDP growth) is presumed to be in a linear relationship with the changes in the unemployment rate.

It is common to make the additional stipulation that the ordinary least squares (OLS) method should be used: the accuracy of each predicted value is measured by its squared residual (the vertical distance between the data point and the fitted line), and the goal is to make the sum of these squared deviations as small as possible. In this case, the slope of the fitted line is equal to the correlation between y and x corrected by the ratio of the standard deviations of these variables. The intercept of the fitted line is such that the line passes through the center of mass (x̄, ȳ) of the data points.

Linear regression is one of the most popular modeling techniques because, in addition to explaining the relationship between variables (like correlation), it also gives an equation that can be used to predict the value of a response variable based on a value of the predictor variable. The formula for simple linear regression is Y = mX + b, where Y is the response (dependent) variable, X is the predictor (independent) variable, m is the estimated slope, and b is the estimated intercept. The same line is often written as y = α + βx, with α as the intercept and β as the slope; in that form it defines a random variable y when x is drawn from the empirical distribution of the x values in our sample.

If you're thinking simple linear regression may be appropriate for your project, first make sure it meets the assumptions of linear regression listed below. Have a look at our analysis checklist for more information on each:

Variables (not components) are used for estimation
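The two OLS properties described above (the slope equals the correlation scaled by the ratio of standard deviations, and the line passes through the center of mass of the data) can be checked numerically. The sketch below uses made-up data and illustrative variable names; it is a sanity check under those assumptions, not a full regression routine.

```python
import math

# Hypothetical data, made up for illustration only.
xs = [1, 2, 3, 4, 5]
ys = [2, 4, 5, 4, 5]
n = len(xs)

mean_x, mean_y = sum(xs) / n, sum(ys) / n

# Sample standard deviations and covariance (dividing by n - 1).
s_x = math.sqrt(sum((x - mean_x) ** 2 for x in xs) / (n - 1))
s_y = math.sqrt(sum((y - mean_y) ** 2 for y in ys) / (n - 1))
cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys)) / (n - 1)

# Pearson correlation between x and y.
r = cov_xy / (s_x * s_y)

# Slope as "correlation corrected by the ratio of standard deviations".
slope = r * s_y / s_x

# Intercept chosen so that the line passes through (mean_x, mean_y).
intercept = mean_y - slope * mean_x

# The fitted value at mean_x should equal mean_y.
passes_through_center = math.isclose(slope * mean_x + intercept, mean_y)

print(f"r = {r:.4f}, slope = {slope:.2f}, intercept = {intercept:.2f}")
print(f"line passes through the center of mass: {passes_through_center}")
```

Because the slope here is built from the correlation, squaring `r` gives the same R-square that a goodness-of-fit section would report for a simple linear regression on this data.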
